Hi, I'm Neehal! I am a first-year PhD student at MIT CSAIL, advised by Prof. Daniela Rus. I also work at Liquid AI on scaling efficient foundation models.

My research interests broadly lie in efficient architecture design for autoregressive sequence modeling, particularly LLMs. Within this space, I primarily focus on linear recurrent architectures as a subquadratic alternative to attention-based ones. I am interested in pushing the Pareto frontier of these recurrent primitives and building an ecosystem to support them for large-scale model training and inference.

I graduated from Harvard in 2024 with an SM in Computer Science and an AB in Mathematics and Computer Science. During my undergrad, I was fortunate to work with Prof. Nir Shavit and Prof. Finale Doshi-Velez.

Email / Google Scholar / Twitter / GitHub / LinkedIn

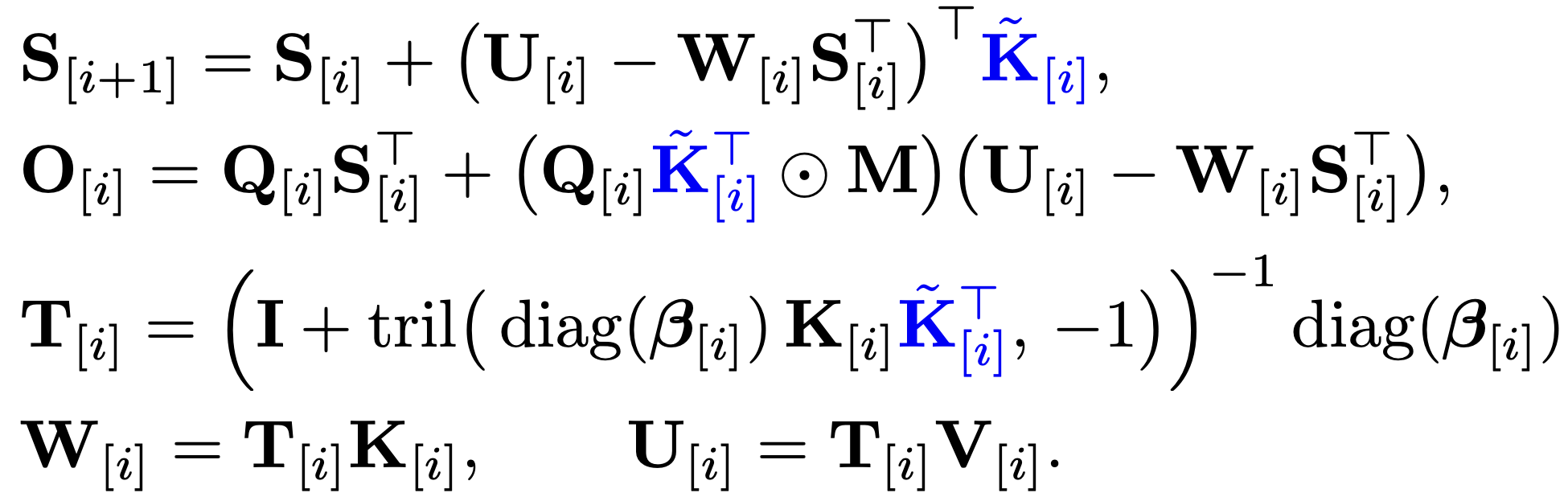

Neehal Tumma*, Noel Loo*, Daniela Rus

ICML, 2026

paper / code / tweet

We show that with least-squares preconditioning, DeltaNet and linear attention reduce to the same recurrence. Using this insight, we introduce preconditioned variants of DeltaNet (PDN), Gated DeltaNet (PGDN), and Kimi Delta Attention (PKDA), demonstrating gains across synthetic recall benchmarks and language modeling at the 340M and 1B scale.

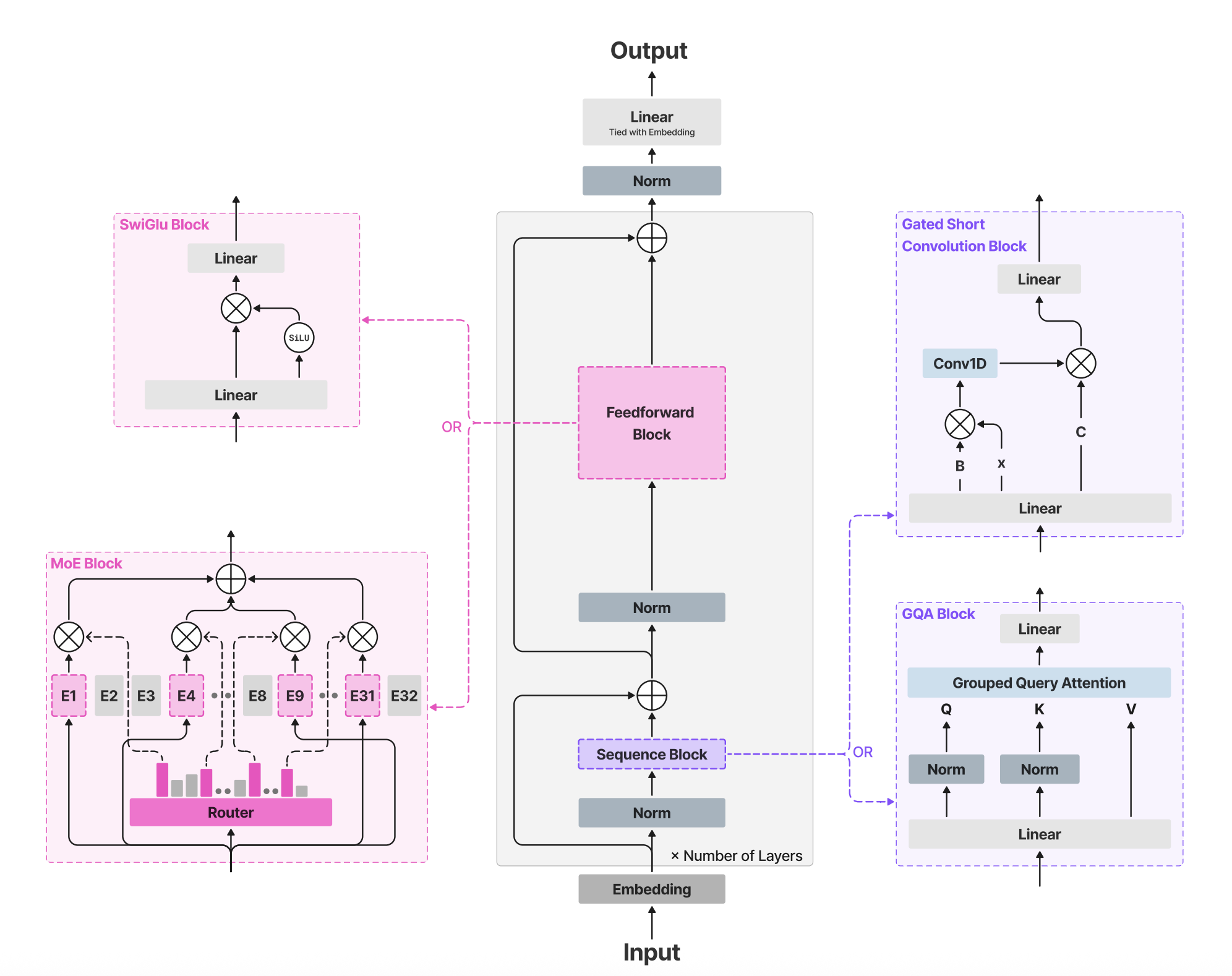

Liquid AI Team

arXiv, 2025

paper / tweet

We introduce LFM2, a family of compact foundation models (350M–8.3B parameters) optimized for edge deployment. Using hardware-in-the-loop architecture search, LFM2 achieves up to 2x faster prefill and decode on CPUs compared to similarly sized models, with extensions to vision-language, speech, and retrieval.

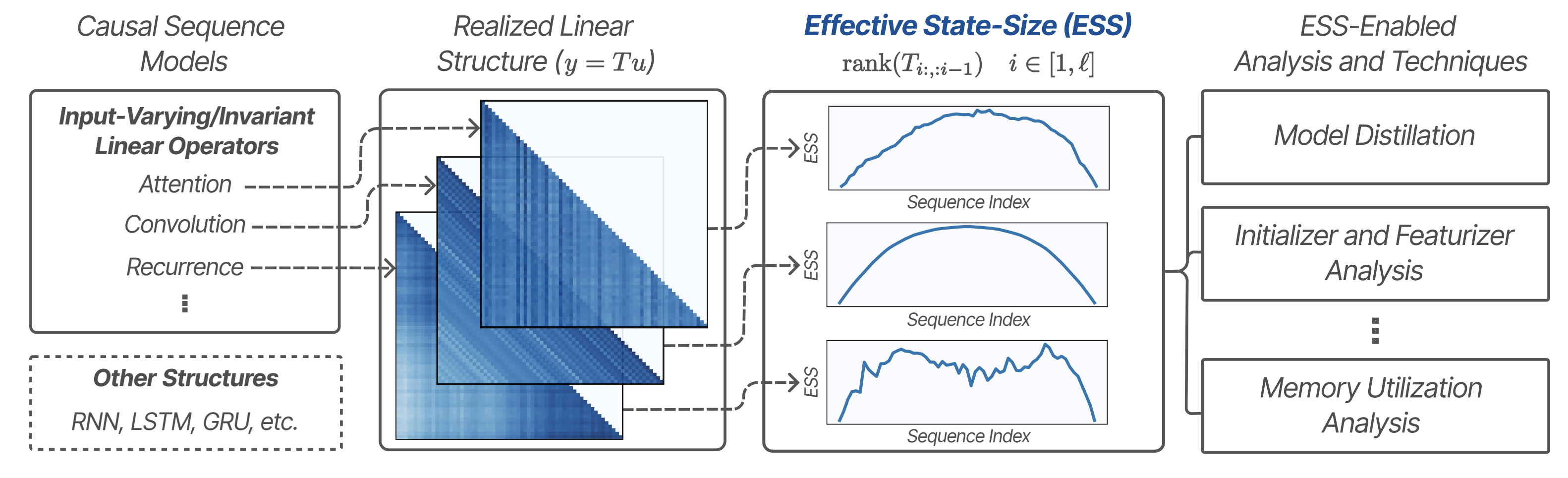

Rom N. Parnichkun*, Neehal Tumma*, Armin W. Thomas, Alessandro Moro, Qi An, Taiji Suzuki, Atsushi Yamashita, Michael Poli, Stefano Massaroli

ICML, 2025

paper / tweet

We introduce effective state-size (ESS), a principled metric grounded in signal processing and control theory for quantifying how sequence models utilize memory. ESS provides interpretable insights into memory dynamics across attention, convolutions, and recurrent architectures, enabling improved initialization, regularization, and distillation.

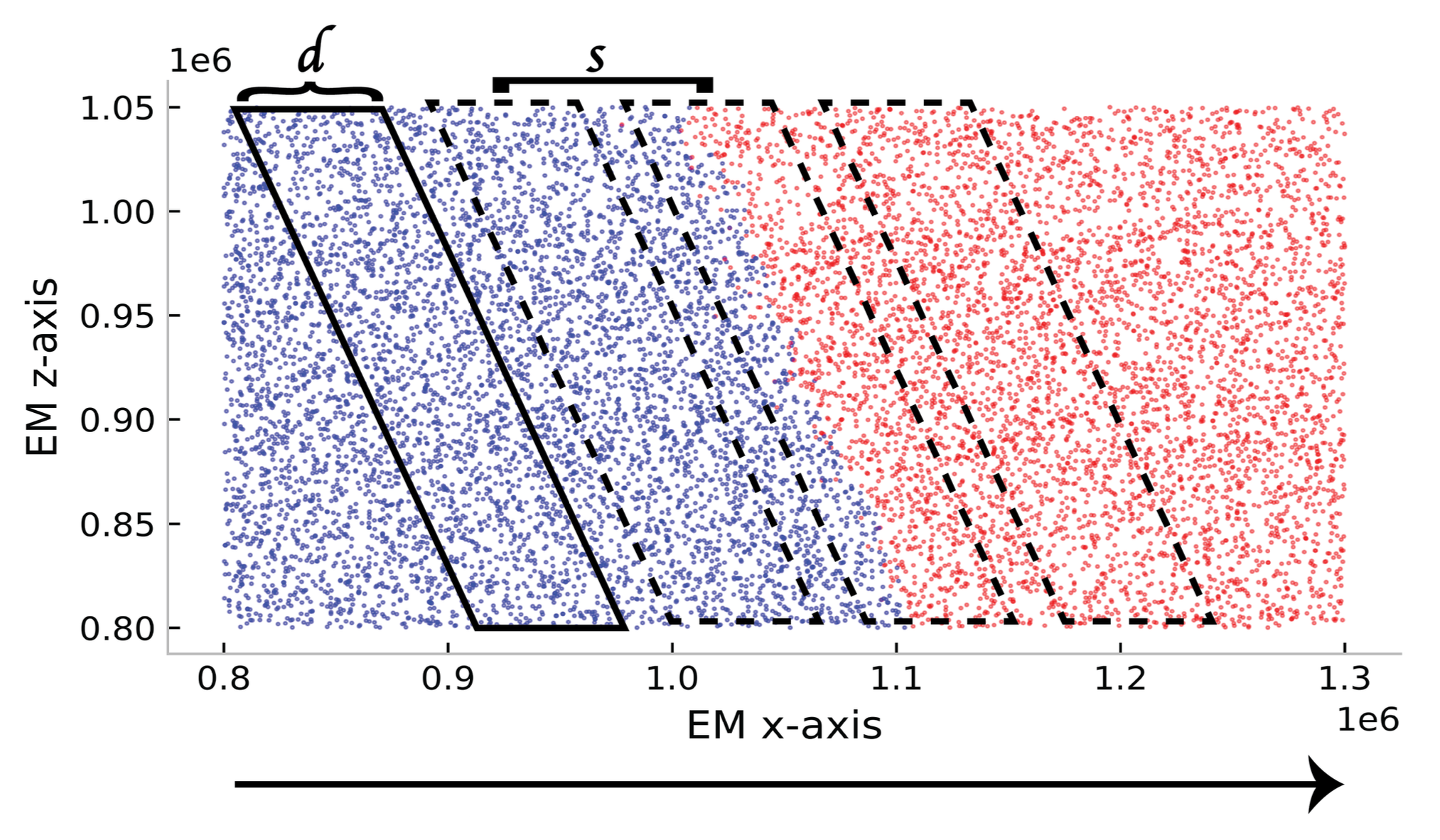

Neehal Tumma*, Linghao Kong*, Shashata Sawmya, Tony Tong Wang, Nir Shavit

Neural Networks, 2025

paper

We propose a statistical sliding window framework for analyzing neural connectivity in large-scale connectomics datasets using both the connectivity graph and functional activations. Applied to the MICrONS dataset, we find that the V1-RL border region exhibits greater synaptic connectivity and more synchronous activity, acting as a bridge between visual areas.

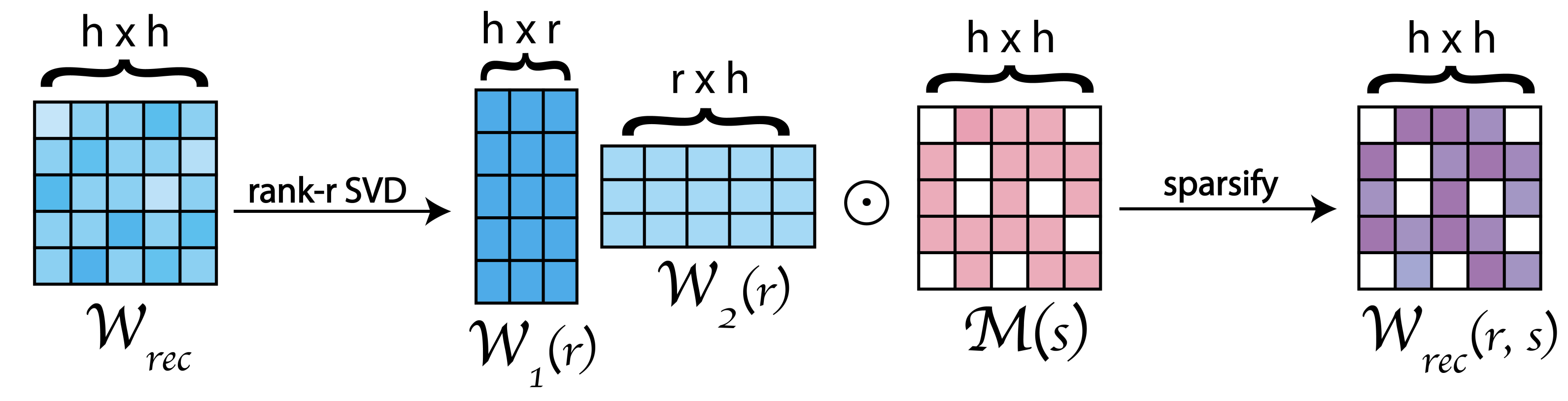

Neehal Tumma, Mathias Lechner, Noel Loo, Ramin Hasani, Daniela Rus

ICLR, 2024 (Spotlight, top 5% of submissions)

paper / tweet

We explore how the recurrent connectivity of RNNs, parameterized by rank and sparsity, influences robustness in closed-loop control. We show that low-rank, sparse connectivity induces desirable network dynamics, enabling closed-form continuous-time networks (CfCs) with fewer parameters to outperform their full-rank counterparts under distribution shift.

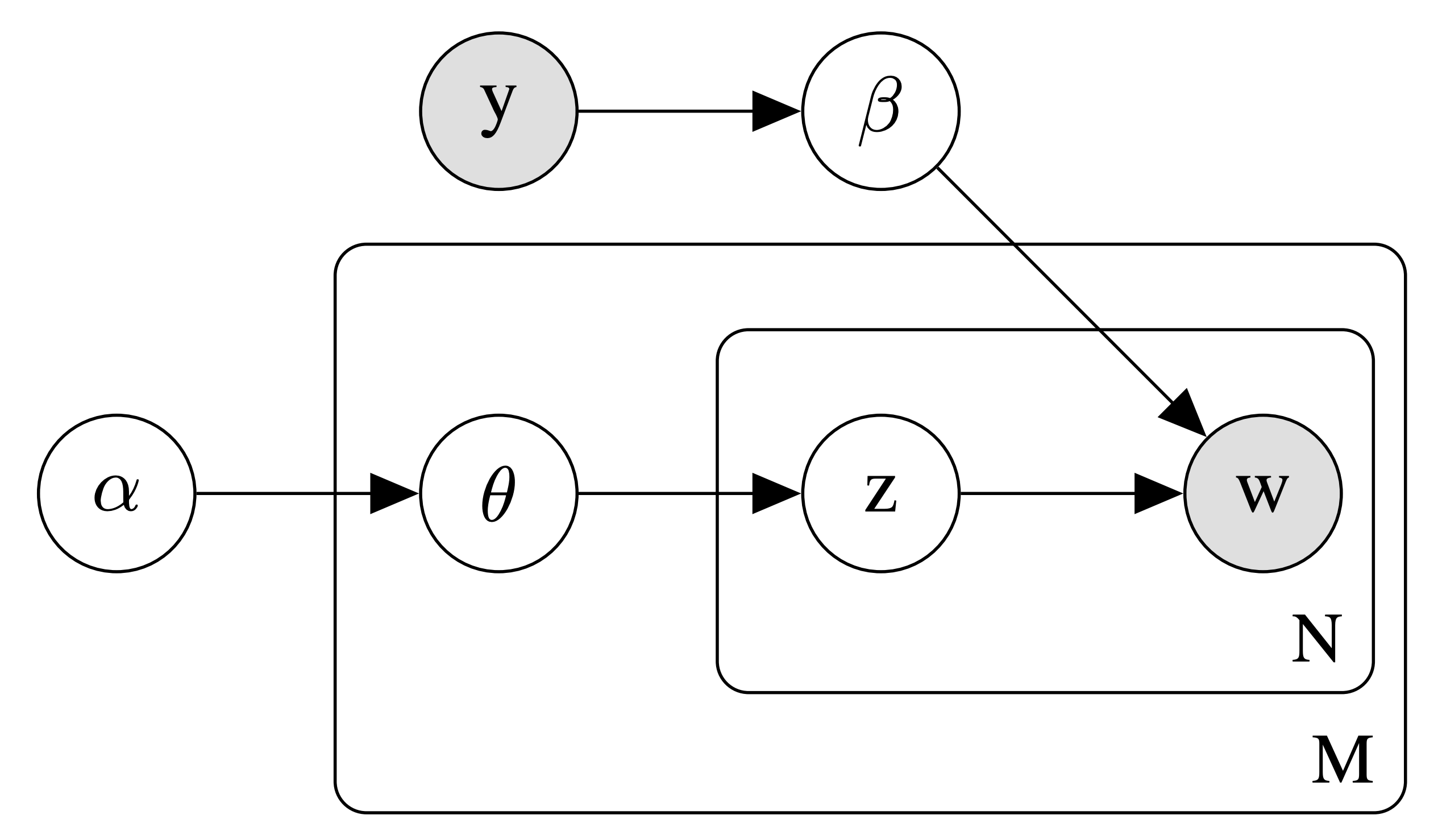

Jeffrey Chiu*, Rajat Mittal*, Neehal Tumma*, Abhishek Sharma, Finale Doshi-Velez

ACL SPNLP, 2022

paper

We introduce the Label-Indexed Neural Topic Model (LI-NTM), the first effective upstream semi-supervised neural topic model. LI-NTM outperforms existing neural topic models in document reconstruction, with the strongest results in regimes with little labeled data.